Web scraping is an automated method of extracting large amounts of data from websites. The data, usually unstructured HTML, is converted into structured formats such as spreadsheets or databases for further use. Scraping can be done with online tools, APIs, or custom code. Major websites such as Google and Facebook provide APIs for structured data access; web scraping is often used for sites that offer no such API or that restrict data access.

Web scraping involves two main components: a crawler and a scraper. The table below compares them.

| Feature | Crawler | Scraper |
| --- | --- | --- |
| Meaning | A program that moves through websites by following links | A tool that collects specific data from web pages |
| Main purpose | To find and discover web pages | To extract the required information |
| How it works | Visits pages and follows links automatically | Reads pages and pulls selected data |
| Type of output | Website URLs and page locations | Text, images, prices, and other data |
| Project role | Used at the start to find pages | Used after crawling to collect data |
| Easy example | Exploring all aisles in a store | Picking only the needed items from the shelves |
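The split between the two roles can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not a production crawler: the `SITE` dictionary is a made-up in-memory stand-in for a real website, and the names `LinkParser`, `TitleParser`, `crawl`, and `scrape_titles` are hypothetical.

```python
from collections import deque
from html.parser import HTMLParser

# Hypothetical in-memory "website": URL path -> HTML. A real crawler would
# download each page over HTTP instead of reading from a dict.
SITE = {
    "/": '<a href="/fridges">Fridges</a> <a href="/about">About</a>',
    "/fridges": '<h1>Refrigerators</h1> <a href="/fridges/coolmax-200">CoolMax 200L</a>',
    "/fridges/coolmax-200": "<h1>CoolMax 200L</h1>",
    "/about": "<h1>About us</h1>",
}


class LinkParser(HTMLParser):
    """Crawler side: collects the href of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


class TitleParser(HTMLParser):
    """Scraper side: collects the text of every <h1> on a page."""

    def __init__(self):
        super().__init__()
        self.in_h1 = False
        self.titles = []

    def handle_starttag(self, tag, attrs):
        if tag == "h1":
            self.in_h1 = True

    def handle_endtag(self, tag):
        if tag == "h1":
            self.in_h1 = False

    def handle_data(self, data):
        if self.in_h1:
            self.titles.append(data.strip())


def crawl(start):
    """Follow links breadth-first and return every page discovered, in order."""
    seen, queue = [start], deque([start])
    while queue:
        url = queue.popleft()
        parser = LinkParser()
        parser.feed(SITE.get(url, ""))
        for link in parser.links:
            if link not in seen:
                seen.append(link)
                queue.append(link)
    return seen


def scrape_titles(url):
    """Pull only the <h1> text from a single page."""
    parser = TitleParser()
    parser.feed(SITE.get(url, ""))
    return parser.titles


pages = crawl("/")                                    # crawler output: URLs
titles = {url: scrape_titles(url) for url in pages}   # scraper output: data
print(pages)
print(titles)
```

The crawler's output is a list of pages, and the scraper's output is the data picked from each of those pages, which mirrors the "Type of output" row in the table.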

How Do Web Scrapers Work?

Web scrapers can extract all the data on a site or only the particular data a user wants. It is best to decide up front which data you need, so that the scraper extracts only that data and finishes quickly. For example, you might scrape an Amazon page for the refrigerators available but need only the data about the different models, not the customer reviews.
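As a rough sketch of that idea, the snippet below keeps the model names from a product listing and ignores the reviews. The HTML and its class names (`model`, `review`) are invented for illustration and do not reflect how any real store marks up its pages; `ClassTextExtractor` is a hypothetical helper built on Python's standard `html.parser` module.

```python
from html.parser import HTMLParser

# Hypothetical product listing; the class names are made up for illustration.
LISTING = """
<div class="product">
  <h2 class="model">FrostFree 300L</h2>
  <p class="review">Keeps everything cold, a bit noisy.</p>
</div>
<div class="product">
  <h2 class="model">CoolMax 200L</h2>
  <p class="review">Great value for a small kitchen.</p>
</div>
"""


class ClassTextExtractor(HTMLParser):
    """Collects text only from elements whose class attribute matches."""

    def __init__(self, wanted_class):
        super().__init__()
        self.wanted_class = wanted_class
        self.open_tag = None  # tag currently being captured, if any
        self.results = []

    def handle_starttag(self, tag, attrs):
        if dict(attrs).get("class") == self.wanted_class:
            self.open_tag = tag

    def handle_endtag(self, tag):
        if tag == self.open_tag:
            self.open_tag = None

    def handle_data(self, data):
        # Text outside a wanted element (reviews, whitespace) is skipped.
        if self.open_tag and data.strip():
            self.results.append(data.strip())


extractor = ClassTextExtractor("model")
extractor.feed(LISTING)
models = extractor.results
print(models)
```

Changing the argument to `"review"` would collect the reviews instead, so the choice of what to extract lives in one place.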

Web Scraping End-to-End Flow

| Step | What Happens |
| --- | --- |
| Input | You provide the website URLs and specify the data you want |
| Visit Page | Scraper opens each URL like a web browser |
| Load Content | The page is downloaded (JavaScript is executed if needed) |
| Read Page | Scraper parses the page structure |
| Collect Data | Only the required data is picked |
| Save Data | Data is cleaned and saved in a format such as CSV, Excel, or JSON |

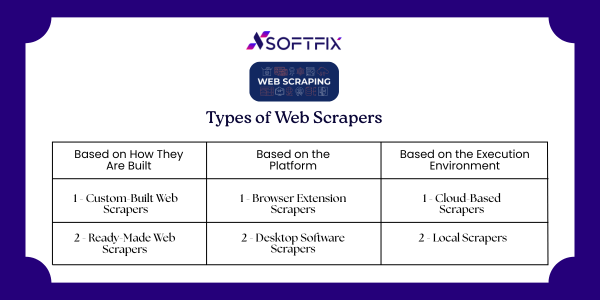
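The flow above can be sketched end to end with Python's standard library. Everything specific here is an assumption made for the example: the `example.com` URLs, the `model` and `price` class names, and the `FAKE_PAGES` dictionary that stands in for the network so the sketch runs offline. It skips JavaScript rendering and Excel output, which need extra tooling.

```python
import csv
import io
import json
from html.parser import HTMLParser

# Input: the URLs to visit and the fields wanted (hypothetical class names).
URLS = ["https://example.com/fridge/1", "https://example.com/fridge/2"]
FIELDS = ["model", "price"]

# Stand-in for the network so the sketch runs offline.
FAKE_PAGES = {
    URLS[0]: '<h2 class="model"> FrostFree 300L </h2><span class="price">$649.00</span>',
    URLS[1]: '<h2 class="model">CoolMax 200L</h2><span class="price">$499.50</span>',
}


def fetch(url):
    """Visit and load: a real scraper would download the page here,
    for example with urllib.request.urlopen(url)."""
    return FAKE_PAGES[url]


class FieldParser(HTMLParser):
    """Read and collect: keep text from elements whose class is a wanted field."""

    def __init__(self, fields):
        super().__init__()
        self.fields = set(fields)
        self.current = None  # field currently being captured, if any
        self.record = {}

    def handle_starttag(self, tag, attrs):
        cls = dict(attrs).get("class")
        if cls in self.fields:
            self.current = cls

    def handle_endtag(self, tag):
        self.current = None

    def handle_data(self, data):
        if self.current:
            self.record[self.current] = data


def clean(record):
    """Clean: trim whitespace and turn the price text into a number."""
    return {
        "model": record["model"].strip(),
        "price": float(record["price"].strip().lstrip("$")),
    }


rows = []
for url in URLS:
    parser = FieldParser(FIELDS)
    parser.feed(fetch(url))
    rows.append(clean(parser.record))

# Save: write the same rows as CSV and as JSON (in memory here, not to disk).
csv_buffer = io.StringIO()
writer = csv.DictWriter(csv_buffer, fieldnames=FIELDS)
writer.writeheader()
writer.writerows(rows)
csv_text = csv_buffer.getvalue()
json_text = json.dumps(rows, indent=2)
print(csv_text)
print(json_text)
```

Keeping fetching, parsing, cleaning, and saving in separate functions means each step can be swapped out, for instance replacing `fetch` with a real HTTP request, without touching the others.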

Types of Web Scrapers

Web scrapers can be grouped in different ways, based on how they are built, where they run, and how they are used.

Based on How They Are Built

Custom-Built Web Scrapers

- These scrapers are made from scratch using programming languages such as Python or JavaScript.

- They need sound coding skills and technical knowledge.

- They provide complete control and can be customized for any particular requirement.

- More advanced features require deeper technical expertise.

Ready-Made Web Scrapers

- These are pre-developed tools that can be downloaded and used directly.

- They generally come with easy-to-use interfaces and built-in features.

- They are best for beginners or users with little technical background.

Based on the Platform

Browser Extension Scrapers

- These scrapers are added as extensions in web browsers like Chrome or Firefox.

- They are quick to install and very easy to use.

- However, they are limited by browser capabilities and are not suitable for large or complex scraping tasks.

Desktop Software Scrapers

- These are standalone applications installed on a computer.

- They provide more advanced features than browser extensions.

- They are not constrained by the browser but require installation and use system resources.

Based on the Execution Environment

Cloud-Based Scrapers

- These scrapers run on cloud servers provided by service providers.

- They do not use your computer’s CPU or memory.

- You can continue working while scraping runs in the background.

Local Scrapers

- These scrapers run directly on your own system.

- They depend on your computer’s hardware resources.

- Heavy scraping tasks may slow down your system.

Why is Python a Popular Programming Language for Web Scraping?

Python is one of the most popular languages for web scraping because it handles most of the steps involved with little effort, and it has a variety of libraries created specifically for the task.

Scrapy is a well-known open-source web crawling framework written in Python. It is well suited to web scraping as well as to extracting data through APIs. Beautiful Soup is another Python library that is highly suitable for web scraping. It builds a parse tree from a page's HTML that can be used to extract data, and it offers several features for navigating and modifying that tree.
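To show what working with a parse tree looks like, here is a small sketch. Beautiful Soup is a third-party package, so this example uses the standard library's `xml.etree.ElementTree` as a stand-in; it is not Beautiful Soup's API, and unlike Beautiful Soup it only accepts well-formed markup. The sample markup is invented for illustration.

```python
import xml.etree.ElementTree as ET

# Well-formed sample markup (hypothetical); ElementTree would reject sloppy HTML.
MARKUP = """
<html>
  <body>
    <ul id="fridges">
      <li class="model">FrostFree 300L</li>
      <li class="model">CoolMax 200L</li>
    </ul>
  </body>
</html>
"""

root = ET.fromstring(MARKUP)  # build the parse tree

# Navigate: walk down from the root to the list, then read its children.
fridge_list = root.find("./body/ul")
models = [li.text for li in fridge_list.findall("li")]

# Search: find matching elements anywhere in the tree by attribute.
tagged = root.findall(".//li[@class='model']")

# Modify: change a node in place, then serialize it again.
fridge_list[0].text = "FrostFree 300L (discontinued)"
updated = ET.tostring(fridge_list[0], encoding="unicode").strip()

print(models)
print(len(tagged))
print(updated)
```

The same three operations (navigate, search, modify) are what the paragraph above describes; a dedicated HTML library adds tolerance for the messy markup found on real sites.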

What is Web Scraping Used for?

Web scraping has applications across many industries. Here are some of the most common.

1. Price Monitoring

Companies can use web scraping to collect product data for their own and competing products and see how it affects their pricing strategies. They can then use this data to set optimal prices and maximize revenue.

2. Market Research

Organizations can use web scraping for market research. High-quality scraped data collected in large volumes helps them analyze consumer trends and decide which direction the company should take in the future.

3. News Monitoring

Scraping news sites can give a company detailed reports on current news. This is even more essential for companies that are frequently in the news or that depend on daily news for their day-to-day functioning. After all, news reports can make or break a company in a single day.

4. Sentiment Analysis

Sentiment analysis is a must for companies that want to understand how consumers feel about their products. Companies can use web scraping to collect data from social media websites such as Facebook and Twitter and find out what the general sentiment about their products is. This helps them create products that people want and stay ahead of their competition.

5. Email Marketing

Companies can also use web scraping for email marketing. They can collect email addresses from different sites and then send bulk promotional and marketing emails to those addresses.

Final Words

Web scraping is a great way to collect useful data from the web when done responsibly. With the right tools, clear goals, and ethical practices, businesses can gain valuable insights and improve decision-making. As data continues to drive growth, web scraping remains a useful and impactful solution.